A very interesting article by Will Knight was published in the MIT Technology Review on May 23, 2018: the US military is funding a project that aims to distinguish “deepreals” from “deepfakes”, i.e. to answer the question of whether a given video or audio recording is real or faked. Please read this relevant article here:

https://www.technologyreview.com/s/611146/the-us-military-is-funding-an-effort-to-catch-deepfakes-and-other-ai-trickery

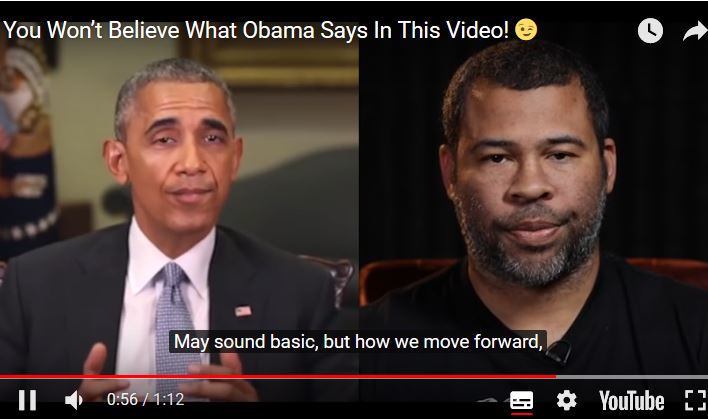

And please watch this amazingly convincing video, from which you can immediately see how important these topics are:

Interestingly, the DARPA people admit that this is a difficult problem, particularly as generative adversarial networks (GANs) are extremely powerful, and it is hard for both AI and humans to check which piece of information is fake and which is trustworthy. While this was not an issue in the past, and machine learning experts largely ignored it, the question “can we trust the information given?” is of rising importance in almost every domain. Trusted sources will be of utmost importance in the future, for us, for our countries and for our societies. See also our paper from January:

Katharina Holzinger, Klaus Mak, Peter Kieseberg & Andreas Holzinger 2018. Can we trust Machine Learning Results? Artificial Intelligence in Safety-Critical Decision Support. ERCIM News, 112, (1), 42-43.