From December 4 to 5, 2018, the WeAreDevelopers AI Congress Vienna will focus on human-machine interaction and bring together two sides: academia and industry. The main questions tackled at this conference include: Can we trust computer decisions? How do we deal with decision bias? The Artificial Intelligence Congress emphasizes the importance of the interaction between humans and computers. From trusting …

If a medical-ai-doctor-robot makes a wrong diagnosis – who is to blame?

Robert Hart, a Cambridge graduate, discussed an interesting and increasingly important issue: who is to blame if a robot AI system makes a misdiagnosis? Currently, medical doctors use AI as decision support, definitely not as a replacement for standard procedures. However, what if technological progress continues and AI gets …

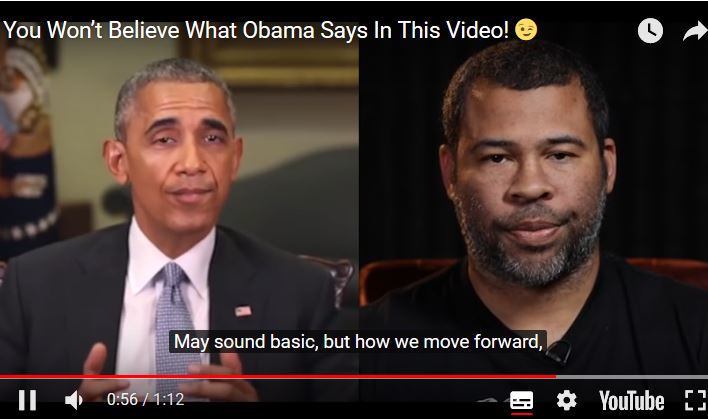

US military is funding an effort to catch deepfakes and AI trickery

A very interesting article by Will KNIGHT was published on May 23, 2018 in the MIT Technology Review: the US military is funding a project that aims to distinguish “deepreals” from “deepfakes”, i.e. to answer the question of whether a given video or audio recording is real or faked. Please read this very interesting and relevant article here: https://www.technologyreview.com/s/611146/the-us-military-is-funding-an-effort-to-catch-deepfakes-and-other-ai-trickery And please watch this …

Deepfakes = deep problem

As funny as it might sound to remove certain actors from movies – this is a serious and growing threat for the future. A couple of years ago it was difficult to manipulate videos, but it has since become very easy, and accessible to everyone, to falsify videos with AI methods. That may result in questions such as “can we trust what …

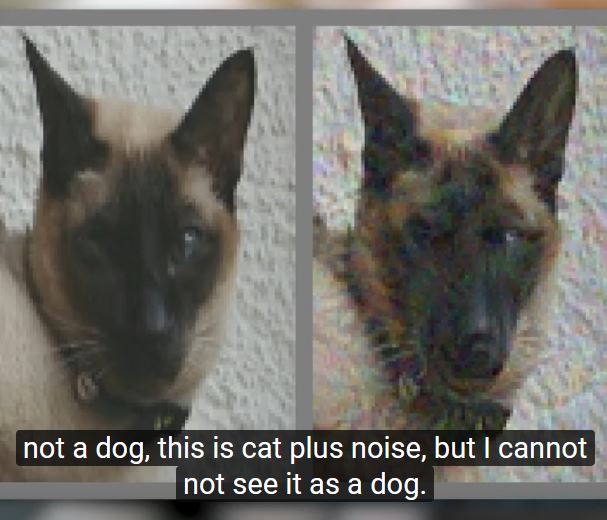

Adversarial Examples that fool both Humans and Computers

Recently an interesting paper was published on arXiv: Gamaleldin F. Elsayed, Shreya Shankar, Brian Cheung, Nicolas Papernot, Alex Kurakin, Ian Goodfellow & Jascha Sohl-Dickstein 2018. Adversarial Examples that Fool both Human and Computer Vision. arXiv:1802.08195. However, maybe even more interesting is the lively debate on it via Two Minute Papers by Károly Zsolnai-Fehér: The word adversarial – adversary (in …

Discern a Cat from a Dog – why is it so difficult?

A very nice explanation of the possibilities and limits of AI, with a focus on machine learning and on how difficult it is to discriminate between a cat and a dog (starting at 4:17). The video also clearly points out why causality is so important: one problem which humans can solve very well and where most AI systems fail is in transfer …

Limits of automatic reasoning

The Venus of Willendorf is an approx. 11 centimeter (4.4 inch) figurine made of limestone and painted with red ochre, dated to approx. 28,000 BC. It was found in 1908 in a small place near Willendorf (Lower Austria) and is now located at the Natural History Museum in Vienna. It was recently banned from Facebook: https://www.telesurtv.net/english/news/Too-Hot-to-Handle-Facebook-Mistakes-Willendorf-Virgin-for-Porn-20180301-0026.html

CfP IFIP CD-MAKE-exAI – Workshop on explainable AI in Hamburg, August 27-30, 2018

I am involved in the organization of a workshop on explainable AI at the University of Hamburg, August 27-30, 2018. Here is the call for papers, please distribute as you like. Call for papers: MAKE-Explainable AI (MAKE – eXAI), IFIP CD-MAKE 2018 Workshop on explainable Artificial Intelligence. Papers due April 1, 2018; Deadline (hard) for Springer LNCS: …

Explainability in Context – The Courts

A very interesting debate between Julius ADEBAYO (FastForward Labs), Paul RIFELJ (Wisconsin Public Defenders) and Andrea ROTH (UC Berkeley Law), moderated by Amanda LEVENDOWSKI (NYU School of Law), took place on April 27, 2017 at the Information Law Institute at the New York University School of Law.